Key Takeaways

- Trust determines adoption. Human operators often ignore or override AI systems in manufacturing compliance without clear explanations.

- Explainable AI connects predictions to operational causes. Engineers need to know which variables triggered a decision before they can act on it.

- Transparency strengthens regulatory readiness. Documented AI reasoning helps manufacturers defend decisions during audits and compliance reviews.

- Simple models are sometimes the better choice in regulated environments. Slightly lower accuracy is often acceptable if the decision logic is understandable.

- Explainability works best alongside human expertise. AI highlights risks, explanations clarify them, and domain experts make the final call.

Manufacturing companies have spent decades improving their compliance processes. Environmental standards, product safety regulations, financial reporting rules, supplier governance frameworks—none of these are new. What is new is the growing role of AI in monitoring, predicting, and even enforcing those rules.

Yet a strange tension has appeared.

AI can detect anomalies in production data faster than any human team. It can identify supplier risks, forecast regulatory exposure, and flag compliance violations before they escalate. But when an AI model produces a recommendation—reject this supplier batch, halt this production run, or flag this emissions report—manufacturing leaders often ask a simple question:

Why? If the answer is unclear, the system becomes difficult to trust.

This is precisely where explainable AI becomes critical. In regulated manufacturing environments, AI systems cannot operate as black boxes. They must provide reasoning, context, and traceability—especially when compliance decisions are involved.

Explainability isn’t just a technical enhancement. Often, it is the difference between AI being adopted or rejected in compliance-heavy industries.

The Trust Problem in AI-Driven Compliance

Manufacturing compliance is inherently conservative. Regulatory frameworks like FDA guidelines, ISO standards, and environmental reporting mandates leave very little room for ambiguity. Decisions must be documented, audited, and defensible.

Traditional rule-based systems worked well in this environment because they were predictable. If a production temperature exceeded a predefined threshold, the system triggered an alert. The rule was visible, and the logic was easy to verify.
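A minimal sketch of such a rule shows why this style of logic is easy to audit: the whole decision fits in one visible comparison. The 80 °C limit, the function name, and the record layout are assumptions made up for illustration.

```python
# Hypothetical limit; a real value would come from the validated process specification.
MAX_CURING_TEMP_C = 80.0


def check_temperature(reading_c: float, limit_c: float = MAX_CURING_TEMP_C) -> dict:
    """Return an alert record whose reason states the exact rule that was evaluated."""
    triggered = reading_c > limit_c
    if triggered:
        reason = f"temperature {reading_c} C exceeded limit {limit_c} C"
    else:
        reason = f"temperature {reading_c} C within limit {limit_c} C"
    return {"alert": triggered, "reason": reason}


alert = check_temperature(83.5)
print(alert["reason"])
```

The reason string is the explanation. Nothing else needs to be reconstructed after the fact, which is the property that is harder to preserve once a learned model replaces the threshold.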

AI changes that equation. Machine learning models detect patterns across thousands of variables—sensor readings, machine telemetry, supply chain signals, maintenance records, and more. The outputs may be accurate, but the reasoning often sits inside complex statistical relationships.

Imagine a scenario: A predictive model flags a batch of pharmaceutical packaging as non-compliant with contamination risk thresholds. The quality manager asks:

What triggered the alert?

If the system simply says, “Model confidence: 92%”, that answer is not operationally useful.

Compliance teams need clarity, such as:

- Which sensor readings contributed most to the decision?

- Was the anomaly linked to temperature drift, humidity variation, or operator intervention?

- Did similar patterns appear in past regulatory violations?

Without explanations, the model becomes difficult to defend during audits or regulatory investigations. And that’s where explainable AI approaches are beginning to reshape AI deployment strategies across factories.

Why Manufacturing Cannot Tolerate Black-Box Models

Consumer tech often tolerates opaque AI decisions. A recommendation algorithm suggesting the wrong movie is inconvenient—but not catastrophic.

Manufacturing operates under different stakes.

Compliance violations can lead to:

- product recalls

- regulatory penalties

- halted production lines

- environmental reporting failures

- safety incidents

Because of this, AI recommendations must be explainable enough to withstand scrutiny from regulators, auditors, and internal risk teams.

There are also practical reasons. Manufacturing operations involve cross-functional decision-making. A single compliance alert might require collaboration between:

- production engineers

- quality assurance teams

- regulatory specialists

- supply chain managers

If AI outputs cannot be interpreted by these stakeholders, they quickly become sidelined. Transparency becomes less of a philosophical goal and more of an operational requirement.

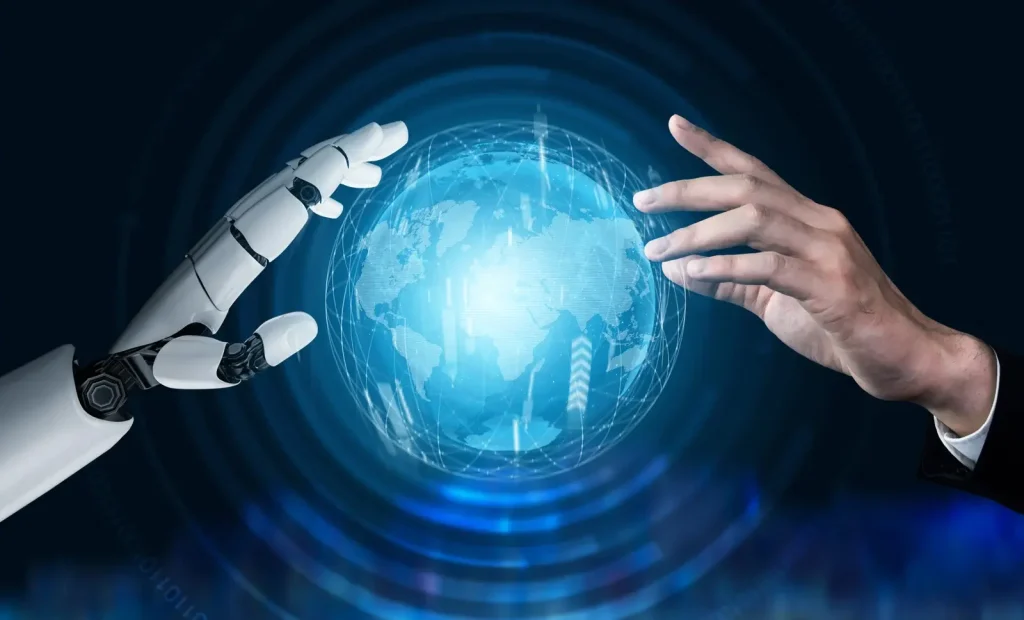

Where Explainable AI Fits Inside Manufacturing Compliance

Explainability becomes particularly important in certain high-impact areas of industrial operations.

1. Quality Control and Defect Analysis

Computer vision systems are increasingly used to inspect products on production lines. These models identify microscopic defects or anomalies far faster than manual inspectors.

However, regulators often require traceable justification for rejecting product batches.

Explainable AI techniques can highlight which image regions triggered the defect classification. Instead of simply labeling an item as defective, the system visually identifies contributing features—surface scratches, dimensional variance, or material inconsistencies.

That level of detail helps engineers validate the decision.

2. Environmental Compliance Monitoring

Manufacturers must track emissions, energy usage, and waste discharge under strict regulatory thresholds.

AI models can predict environmental compliance risks by analyzing historical plant data alongside operational conditions. But again, regulators expect clarity.

Explainability helps answer questions like:

- Did emission forecasts increase because of furnace temperature variation?

- Was a supplier material change responsible for higher pollutant output?

- Did equipment wear patterns contribute?

Transparency matters not just for reporting but for corrective action.

3. Supply Chain Risk Detection

Supplier compliance has become a major regulatory focus, especially with expanding ESG and sustainability mandates.

AI systems now evaluate supplier risk signals such as delivery inconsistencies, financial instability, or labor compliance indicators.

But procurement teams rarely act on risk scores alone.

They want insight into why a supplier was flagged.

Explainable AI surfaces contributing factors such as:

- irregular shipment patterns

- inconsistent quality reports

- regulatory filings indicating operational disruption

Without these explanations, supplier relationships can be damaged by unexplained AI judgments.

Techniques Behind Explainable AI

Explainability is not a single technology. It’s a combination of modeling choices, interpretability techniques, and visualization strategies.

Some of the most commonly used approaches include:

1. Feature Importance Analysis

This technique identifies which input variables had the greatest influence on a model’s prediction.

In manufacturing compliance scenarios, this analysis might reveal that:

- pressure fluctuations were the primary predictor of equipment failure

- specific material properties triggered defect classification

- operator shift patterns correlated with safety incidents

Feature importance gives engineers something tangible to investigate.
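One common way to measure this influence is permutation importance: shuffle one input column at a time and see how much the model's error grows. The sketch below is a standard-library illustration, not a production implementation. The `predict_risk` function stands in for a trained model, and the feature names and weights are invented for the example.

```python
import random

FEATURES = ["pressure_variation", "material_density", "shift_hours"]


def predict_risk(row):
    """Hypothetical stand-in for a trained model: a fixed weighted sum of three readings."""
    pressure_variation, material_density, shift_hours = row
    return 0.70 * pressure_variation + 0.25 * material_density + 0.05 * shift_hours


def mean_abs_error(model, rows, targets):
    return sum(abs(model(r) - t) for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_features, seed=0):
    """Error increase after shuffling each feature column on its own."""
    rng = random.Random(seed)
    baseline = mean_abs_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the target
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_abs_error(model, shuffled, targets) - baseline)
    return importances


# Synthetic data: targets are the model's own outputs, so the baseline error is zero.
data_rng = random.Random(42)
rows = [(data_rng.random(), data_rng.random(), data_rng.random()) for _ in range(200)]
targets = [predict_risk(r) for r in rows]

scores = permutation_importance(predict_risk, rows, targets, len(FEATURES))
ranking = sorted(zip(FEATURES, scores), key=lambda pair: pair[1], reverse=True)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Because the toy model weights pressure variation most heavily, shuffling that column hurts the most and it lands at the top of the ranking. On a real model the same procedure would run against held-out production data.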

2. SHAP and Local Explanations

Methods like SHAP (SHapley Additive exPlanations) explain individual predictions, not just overall model behavior.

This matters because compliance decisions often occur at the case level. A regulator reviewing a rejected batch may ask, ‘Why was this specific batch flagged?’ Local explanation techniques provide that answer.
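To make the idea concrete, the sketch below computes exact Shapley values for one batch by enumerating every feature coalition. It does not use the SHAP library, which works with a background dataset and faster approximations. Here a single baseline reading replaces "missing" features, and the risk function, feature names, and numbers are all hypothetical.

```python
from itertools import combinations
from math import factorial

FEATURES = ["temperature_drift", "humidity", "operator_interventions"]

# Hypothetical "normal" reading used when a feature is left out of a coalition.
BASELINE = {"temperature_drift": 0.0, "humidity": 45.0, "operator_interventions": 0}


def contamination_risk(x):
    """Hypothetical stand-in for a trained model, including one interaction term."""
    risk = 0.05
    risk += 0.10 * x["temperature_drift"]
    risk += 0.004 * max(x["humidity"] - 45.0, 0.0)
    if x["temperature_drift"] > 2.0 and x["operator_interventions"] > 0:
        risk += 0.20
    return risk


def coalition_value(coalition, instance):
    """Model output when only the features in `coalition` take the batch's values."""
    x = {f: (instance[f] if f in coalition else BASELINE[f]) for f in FEATURES}
    return contamination_risk(x)


def shapley_values(instance):
    n = len(FEATURES)
    phi = {}
    for feature in FEATURES:
        others = [g for g in FEATURES if g != feature]
        total = 0.0
        for k in range(n):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                without = coalition_value(set(subset), instance)
                with_feature = coalition_value(set(subset) | {feature}, instance)
                total += weight * (with_feature - without)
        phi[feature] = total
    return phi


batch = {"temperature_drift": 3.0, "humidity": 55.0, "operator_interventions": 2}
phi = shapley_values(batch)
for name, value in phi.items():
    print(f"{name}: {value:+.2f}")
```

The contributions add up to the gap between this batch's risk and the baseline risk, so the answer to "why this batch?" is a per-feature breakdown of that gap. Exact enumeration grows exponentially with the number of features, which is why practical tools approximate it.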

3. Visual Interpretability

In computer vision models used for defect detection, visual heatmaps show which image regions influenced the classification.

This type of explanation resonates strongly with engineers because it connects model reasoning to physical product characteristics.
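One simple way to build such a heatmap is occlusion sensitivity: cover one patch of the image at a time and record how much the defect score drops. The sketch below uses plain Python lists and a toy scoring function in place of a real vision model. The patch size, fill value, and synthetic scratch are assumptions for illustration.

```python
def defect_score(image):
    """Hypothetical stand-in for a vision model: fraction of unusually bright pixels."""
    pixels = [p for row in image for p in row]
    return sum(1 for p in pixels if p > 0.8) / len(pixels)


def occlusion_heatmap(image, score_fn, patch=2, fill=0.2):
    """Score drop when each patch is replaced by a neutral fill value."""
    height, width = len(image), len(image[0])
    base = score_fn(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = [row[:] for row in image]  # copy so the original stays intact
            cells = [
                (r, c)
                for r in range(top, min(top + patch, height))
                for c in range(left, min(left + patch, width))
            ]
            for r, c in cells:
                occluded[r][c] = fill
            drop = base - score_fn(occluded)
            for r, c in cells:
                heat[r][c] = drop
    return heat


# Synthetic 6x6 surface with a bright scratch on row 3, columns 2 to 4.
image = [[0.2] * 6 for _ in range(6)]
for col in range(2, 5):
    image[3][col] = 0.95

heat = occlusion_heatmap(image, defect_score)
for row in heat:
    print(" ".join(f"{value:.3f}" for value in row))
```

Only the patches that overlap the scratch show a non-zero drop, which is the pattern an engineer would expect to see overlaid on the product image. Gradient-based methods produce similar maps for neural networks at lower cost.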

4. Rule Extraction

Some organizations use hybrid models that combine machine learning with understandable rule sets.

For example, a predictive compliance system might produce outputs like “Emission risk increased due to a combination of furnace temperature > threshold AND material composition variance.”

These rule-like explanations are easier for compliance teams to validate.
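A rule-like explanation of this kind can be read directly off a decision tree by recording each comparison along the path to the prediction. The sketch below assumes a tiny hand-written tree. The thresholds, feature names, and labels are invented, and a real system would extract the tree from a trained model.

```python
# Hypothetical two-level tree; each internal node tests one feature against a threshold.
TREE = {
    "feature": "furnace_temp_c",
    "threshold": 1450.0,
    "low": {"label": "normal emission risk"},
    "high": {
        "feature": "composition_variance",
        "threshold": 0.05,
        "low": {"label": "normal emission risk"},
        "high": {"label": "elevated emission risk"},
    },
}


def explain(tree, reading):
    """Walk the tree and return the label plus the conditions that led to it."""
    conditions = []
    node = tree
    while "label" not in node:
        feature, threshold = node["feature"], node["threshold"]
        if reading[feature] > threshold:
            conditions.append(f"{feature} > {threshold}")
            node = node["high"]
        else:
            conditions.append(f"{feature} <= {threshold}")
            node = node["low"]
    return node["label"], " AND ".join(conditions)


label, rule = explain(TREE, {"furnace_temp_c": 1480.0, "composition_variance": 0.08})
print(f"{label}: {rule}")
```

The output pairs the prediction with the exact conditions that produced it, which a compliance reviewer can check against plant records line by line.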

Why Explainability Sometimes Fails in Real Factories

Despite the progress in explainability techniques, real-world implementations are rarely straightforward.

One of the major misconceptions is that explainability automatically builds trust.

It doesn’t. Trust emerges when explanations are both technically sound and operationally meaningful.

In practice, problems often appear.

1. Too Much Technical Detail

Some explanation tools produce outputs that only data scientists can understand. A production supervisor doesn’t benefit from a chart showing Shapley values across 200 variables. They want a clear narrative: “The model flagged this batch because temperature drift exceeded normal patterns during the curing phase.” If explanations remain too abstract, adoption stalls.
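Turning raw attribution numbers into that kind of narrative can be a thin layer on top of the explanation tool. The sketch below keeps the top risk-raising factors and maps them to plain-language phrases. The phrase table, feature names, and contribution values are hypothetical and would need input from the people who run the line.

```python
# Hypothetical plain-language phrases, one per model feature.
PHRASES = {
    "temperature_drift": "temperature drift exceeded normal patterns during the curing phase",
    "humidity": "humidity was above its usual range",
    "operator_interventions": "manual operator interventions occurred during the run",
}


def summarize(contributions, top_k=2, phrases=PHRASES):
    """Turn per-feature contributions into one sentence for a supervisor."""
    raising = [(name, value) for name, value in contributions.items() if value > 0]
    top = sorted(raising, key=lambda pair: pair[1], reverse=True)[:top_k]
    if not top:
        return "No single factor raised the risk for this batch."
    reasons = [phrases.get(name, name.replace("_", " ")) for name, _ in top]
    return "The model flagged this batch because " + " and ".join(reasons) + "."


sentence = summarize(
    {"temperature_drift": 0.40, "humidity": 0.04, "operator_interventions": 0.10}
)
print(sentence)
```

Limiting the sentence to two or three factors is a deliberate choice. The full attribution table can still sit behind a drill-down link for the data science team.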

2. Misleading Explanations

Explainability tools approximate a model’s behavior. They don’t always reflect how the model actually arrives at its output.

In high-risk environments, misleading explanations can create false confidence. Manufacturers deploying explainable AI systems must validate explanations as rigorously as they validate predictions.
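One basic sanity check is a deletion test: reset each feature to a baseline value, measure how much the prediction really moves, and confirm that the explanation ranks features in the same order. The sketch below is one possible check under simplifying assumptions, with a hypothetical risk model and made-up attribution values. It is not a complete validation procedure.

```python
# Hypothetical "normal" values used to neutralize a feature.
BASELINE = {"temperature_drift": 0.0, "humidity": 45.0}


def risk_model(x):
    """Hypothetical stand-in for the deployed model."""
    return 0.05 + 0.10 * x["temperature_drift"] + 0.004 * max(x["humidity"] - 45.0, 0.0)


def measured_effects(model, instance, baseline):
    """Prediction change when each feature alone is reset to its baseline value."""
    full = model(instance)
    return {f: abs(full - model({**instance, f: baseline[f]})) for f in instance}


def ranking_agrees(attributions, effects):
    """True if the explanation orders features the same way the deletion test does."""
    by_attribution = sorted(attributions, key=lambda f: abs(attributions[f]), reverse=True)
    by_effect = sorted(effects, key=lambda f: effects[f], reverse=True)
    return by_attribution == by_effect


batch = {"temperature_drift": 3.0, "humidity": 55.0}
effects = measured_effects(risk_model, batch, BASELINE)

faithful = {"temperature_drift": 0.30, "humidity": 0.04}    # matches the model
misleading = {"temperature_drift": 0.04, "humidity": 0.30}  # blames the wrong feature

print(ranking_agrees(faithful, effects), ranking_agrees(misleading, effects))
```

An explanation that fails this kind of test should be treated like a failed prediction test: investigated before anyone acts on it.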

3. Operational Complexity

Factories produce enormous data volumes: IoT sensors, MES systems, ERP records, and machine telemetry.

Explaining predictions across such complex data environments can be computationally expensive. Real-time explainability—especially for streaming sensor data—is still an emerging capability.

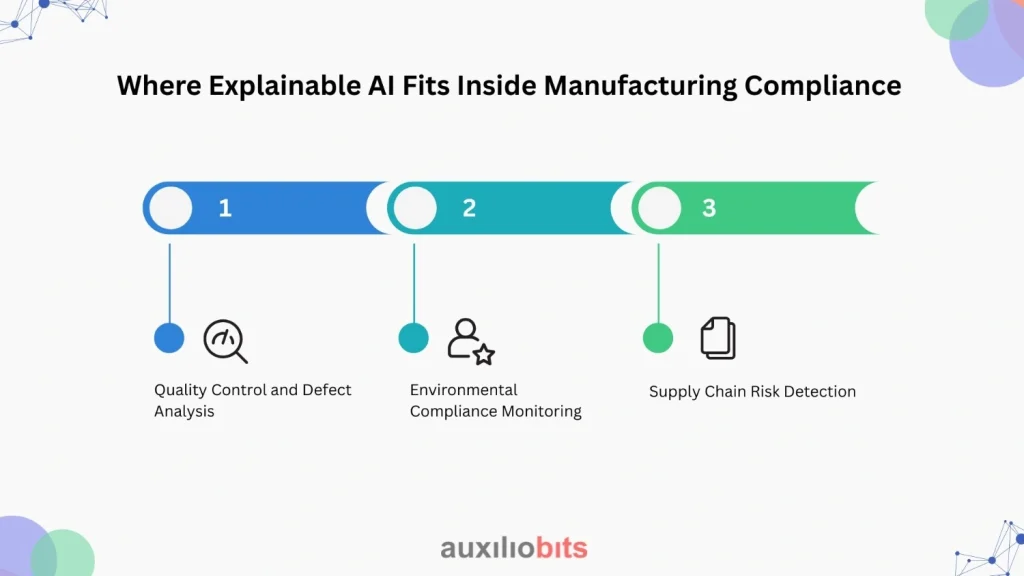

Designing AI Systems That Compliance Teams Will Trust

Technology alone won’t solve the explainability challenge.

Manufacturers that successfully deploy explainable AI tend to follow a few practical design principles.

1. Start With Interpretable Models Where Possible

Not every problem requires deep neural networks. In some compliance use cases, simpler models such as decision trees or small gradient-boosted ensembles perform well and are easier to interpret.

There’s a quiet industry shift happening here. Some manufacturers are deliberately sacrificing small increments of predictive accuracy in exchange for greater transparency.

And honestly, that trade-off often makes sense.

2. Integrate Explanations Into Operational Interfaces

Explanations should appear directly inside the dashboards or workflow systems that teams already use.

For example: A quality inspection dashboard might display:

- predicted defect probability

- key contributing factors

- similar historical cases

This keeps explanations actionable rather than theoretical.
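As a rough sketch, the three items above could be assembled into a single payload for the dashboard. Similar historical cases are found here with a plain nearest-neighbor lookup. The case IDs, feature layout, and field names are assumptions, and a real system would scale the features before measuring distance.

```python
from math import dist

# Hypothetical history: (case id, [temperature drift, humidity], outcome).
HISTORY = [
    ("B-101", [0.2, 44.0], "passed"),
    ("B-102", [2.9, 56.0], "rejected"),
    ("B-103", [3.4, 53.0], "rejected"),
    ("B-104", [0.5, 47.0], "passed"),
]


def similar_cases(features, history, k=2):
    """Return the k past cases closest to the current readings (unscaled Euclidean distance)."""
    ranked = sorted(history, key=lambda case: dist(features, case[1]))
    return [{"case_id": cid, "outcome": outcome} for cid, _, outcome in ranked[:k]]


def dashboard_payload(defect_probability, contributions, features, history):
    """Bundle the prediction, its main drivers, and precedent cases for display."""
    top = sorted(contributions.items(), key=lambda pair: abs(pair[1]), reverse=True)[:3]
    return {
        "defect_probability": round(defect_probability, 2),
        "key_factors": [name for name, _ in top],
        "similar_cases": similar_cases(features, history),
    }


payload = dashboard_payload(
    0.59,
    {"temperature_drift": 0.40, "operator_interventions": 0.10, "humidity": 0.04},
    [3.0, 55.0],
    HISTORY,
)
print(payload)
```

Showing precedent cases next to the score lets an inspector compare the flagged batch with outcomes they already know, without leaving the screen they work in.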

3. Document Decision Trails

Compliance processes require auditability.

AI systems should log:

- model inputs

- predictions

- explanation outputs

- operator decisions

These records become invaluable during regulatory reviews. Without them, even accurate AI systems can become liabilities.
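A decision trail can be as simple as one structured record per decision. The sketch below serializes the four items listed above as a JSON line and attaches a SHA-256 digest so later edits are detectable. The field names, IDs, and the digest scheme are assumptions for illustration, not a prescribed audit format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    batch_id: str
    model_version: str
    inputs: dict            # model inputs as seen at prediction time
    prediction: float
    explanation: dict       # per-feature contributions shown to the operator
    operator_decision: str
    operator_id: str
    logged_at: str          # ISO 8601 timestamp


def to_log_line(record: DecisionRecord) -> str:
    """Serialize the record with a digest of its canonical JSON form."""
    payload = asdict(record)
    canonical = json.dumps(payload, sort_keys=True)
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return json.dumps({"record": payload, "sha256": digest}, sort_keys=True)


def verify_log_line(line: str) -> bool:
    """Recompute the digest and compare it with the stored one."""
    entry = json.loads(line)
    canonical = json.dumps(entry["record"], sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest() == entry["sha256"]


record = DecisionRecord(
    batch_id="B-105",
    model_version="risk-model-1.4.2",
    inputs={"temperature_drift": 3.0, "humidity": 55.0},
    prediction=0.59,
    explanation={"temperature_drift": 0.40, "humidity": 0.04},
    operator_decision="hold_batch",
    operator_id="qa-017",
    logged_at="2024-01-15T08:30:00+00:00",
)
line = to_log_line(record)
print(verify_log_line(line))
```

Storing the explanation next to the prediction matters because explanation tools and models both change over time. The log should show what the operator actually saw when the decision was made.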

4. Combine AI With Human Oversight

Explainability does not eliminate the need for human judgment. Instead, it enables collaborative decision-making. In many manufacturing environments, the best approach looks something like this: AI identifies risk patterns, explanations clarify the reasoning behind them, and human experts validate or override the decisions. This structure maintains accountability while still benefiting from AI’s analytical capabilities.

Why Explainable AI Is Becoming a Regulatory Expectation

Regulators themselves are beginning to pay attention. Across industries, discussions around algorithmic accountability are accelerating.

Compliance teams are already encountering questions such as:

- Can you explain how this AI system makes decisions?

- Is the model bias-tested and documented?

- Are decision logs available for review?

Manufacturers deploying opaque AI tools may eventually face regulatory pressure to demonstrate explainability. Forward-thinking organizations are preparing now.

The Road Ahead for Explainable AI in Manufacturing

Explainable AI is still evolving, and frankly, it remains imperfect. Some models will always be complex. Some explanations will remain approximations. But the direction is clear.

Manufacturing companies are moving toward AI systems that justify their decisions, not just produce them.

Trust and transparency will determine whether AI becomes embedded in compliance workflows—or remains an experimental technology.

In practical terms, the future likely includes:

- AI models paired with real-time explanation engines

- audit-ready decision histories

- visual interpretability tools integrated into operational dashboards

- regulatory frameworks requiring explainability documentation

None of these measures eliminates the need for human expertise. If anything, explainable AI reinforces it. Because when an AI system explains its reasoning, the next step still belongs to the engineer, the quality manager, or the compliance officer who understands the factory floor better than any algorithm ever will.