Key Takeaways

- Carbon footprint automation is less about complex calculations and more about solving fragmented data ingestion across systems like ERP, IoT, and vendor reports.

- Multi-source ingestion becomes difficult because data comes in different formats, frequencies, and ownership structures, making standardization a real challenge.

- Automation without proper normalization can lead to inconsistent and unreliable outputs, as units, formats, and emission mappings may vary across sources.

- Instead of aiming for perfect automation, organizations benefit more from resilient systems that can handle missing data, delays, and exceptions effectively.

- The long-term success of Carbon Data Automation depends more on governance, clear ownership, and process alignment than on the choice of tools or technology.

There’s a quiet misconception in most sustainability conversations: that carbon reporting is a calculation problem. It isn’t. It’s a data problem—messy, fragmented, and often political.

Consider consulting anyone who has attempted to establish a carbon inventory within a manufacturing or logistics-intensive enterprise. The formulas are straightforward enough. Emission factors are published. Frameworks like the GHG Protocol are well established. Yet timelines slip, data quality degrades, and “automation initiatives” stall halfway through.

The friction almost always traces back to one thing: multi-source ingestion.

Not dashboards. Not analytics. Not even compliance. Just getting the data—accurately, consistently, and at scale. And that’s precisely where carbon data automation and broader carbon footprint automation efforts either succeed or quietly fall apart.

The Reality of Carbon Data: It Doesn’t Live Where You Expect

In theory, emissions data should sit neatly inside ERP systems or sustainability platforms. In practice, it’s scattered across:

- Utility bills sitting in vendor portals

- Fuel consumption logs maintained in Excel by plant managers

- IoT sensors streaming partial telemetry (but not always calibrated)

- Procurement systems track spending—but not always quantities

- Logistics providers sharing PDFs once a month (if that)

You don’t “pull” carbon data from a single system. You assemble it—piece by piece—from sources that were never designed to talk to each other. Even within the same organization, two plants might track the same metric in entirely different formats. One reports diesel usage in litres, another in cost. Someone else estimates it quarterly. None of them are technically wrong—but none of them are aligned either.

This is where most automation conversations start to oversimplify.

Why Multi-Source Ingestion Is Harder Than It Sounds

There’s a tendency to treat ingestion as a technical integration problem: connect APIs, map fields, and you’re done. That works for structured systems. Carbon data isn’t that cooperative.

1. Structured vs. Semi-Structured vs. “Whatever This Is”

Some data flows cleanly:

- ERP exports

- IoT streams

- Meter readings

Others don’t:

- PDF invoices from utilities

- Emails with attachments

- Vendor reports with inconsistent templates

And then there’s the gray area—files that look structured but change format every quarter. Automation struggles not because tools are lacking, but because consistency is lacking.

2. Frequency Mismatch

Not all data arrives at the same cadence:

- Energy usage: daily or real-time

- Supplier emissions: quarterly

- Travel data: monthly (and often delayed)

Trying to synchronize these into a unified carbon view introduces lag, gaps, and sometimes duplication. You can automate ingestion, but you can’t always automate timeliness.

3. Ownership Is Fragmented

Finance owns spend data. Operations owns fuel usage. Sustainability teams… own the outcome but rarely the data sources.

That creates subtle issues:

- Access delays

- Version conflicts

- “Shadow spreadsheets” maintained outside official systems

Tooling cannot fix unclear ownership. In fact, automation can amplify bad governance if you’re not careful.

What Carbon Data Automation Looks Like

When organizations get carbon data automation right, it rarely looks like a single platform rollout. It’s more of an ecosystem—stitched together with careful orchestration.

At a practical level, it involves three layers:

1. Ingestion Layer: Pulling from Everywhere

This is where multi-source ingestion becomes real:

- API connectors for ERP, procurement, and logistics systems

- OCR + AI pipelines for invoices and utility bills

- Email parsers extracting data from attachments

- IoT integrations capturing real-time energy consumption

But here’s the nuance: Not all sources deserve the same level of automation.

Some organizations over-engineer ingestion for low-impact data streams while ignoring high-impact manual processes (like supplier emissions).

A more pragmatic approach:

- Automate high-volume, repeatable sources first

- Standardize semi-structured inputs gradually

- Accept that some long-tail data will remain partially manual

Yes, that sounds less “fully automated”—but it works better in reality.

2. Normalization Layer: The Unseen Bottleneck

Collecting data is only half the battle. Making it usable is harder.

Consider something as simple as fuel consumption:

- Liters vs. gallons

- Cost-based estimates vs. actual usage

- Regional emission factors

Without normalization, your automated pipeline produces inconsistent outputs—just faster.

Effective carbon footprint automation includes:

- Unit standardization

- Data validation rules

- Context-aware transformations (e.g., geography-based emission factors)

This layer is often underestimated. It shouldn’t be.

3. Attribution and Mapping

Raw activity data doesn’t equal emissions. It needs to be mapped to:

- Scope 1, 2, or 3 categories

- Business units or cost centers

- Reporting frameworks

This is where domain knowledge matters. You can automate mappings, but only after defining them clearly—and that’s usually an iterative process. Teams often revisit mappings multiple times in the first year.

A Manufacturing Case: Where Things Usually Break

A mid-sized manufacturing enterprise attempted to automate its carbon data pipeline across five plants.

On paper, the architecture looked solid:

- ERP integration for procurement data

- IoT sensors for energy usage

- Vendor portal for logistics emissions

But within three months, issues surfaced:

- Two plants reported electricity usage differently (one net, one gross)

- Diesel consumption was partially estimated in one location

- Vendor emissions data arrived late—and sometimes not at all

The system wasn’t failing technically. It was failing contextually.

What eventually worked:

- Introducing validation checkpoints before ingestion

- Creating fallback estimation logic (with transparency flags)

- Standardizing reporting templates across plants

Interestingly, they scaled back automation in some areas before scaling it up again—once the data foundation improved. There’s a lesson in that. Automation doesn’t fix broken processes. It exposes them.

Where AI Actually Helps

There’s a lot of excitement around AI in carbon reporting. Some of it is justified. Some of it is… optimistic.

Where AI Adds Real Value

- Extracting data from unstructured documents (invoices, PDFs)

- Identifying anomalies in energy consumption patterns

- Suggesting emission factor mappings

These are high-friction areas where traditional rules-based systems struggle.

Where AI Falls Short

- Fixing inconsistent data definitions across teams

- Replacing missing data entirely

- Resolving ownership disputes

In other words, AI enhances carbon data automation, but it doesn’t replace the need for governance.

Also read: Marketing Automation for Long Manufacturing Sales Cycles

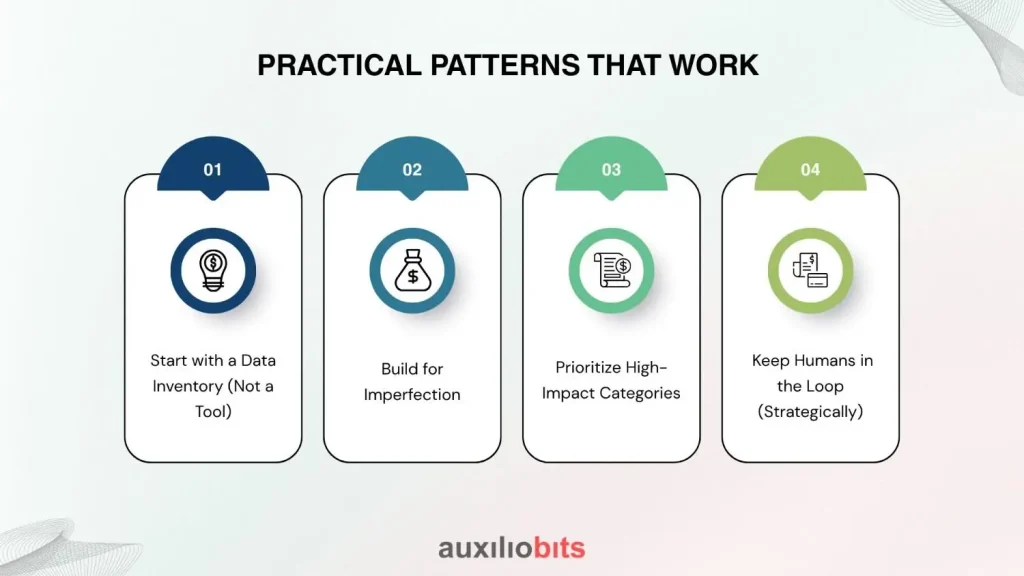

Practical Patterns That Work

After working across multiple implementations, a few patterns show up repeatedly—not as best practices, but as survival tactics.

1. Start with a Data Inventory (Not a Tool)

Before building pipelines, map

- Data sources

- Formats

- Owners

- Frequencies

It sounds basic. It almost never happens thoroughly.

2. Build for Imperfection

Assume:

- Data will be late

- Formats will change

- Some inputs will be missing

Design your carbon footprint automation pipeline to handle these gracefully:

- Flag uncertainties

- Allow overrides

- Maintain audit trails

Rigid systems break. Flexible ones adapt.

3. Prioritize High-Impact Categories

Not all emissions are equal.

Focus automation efforts on:

- Energy consumption (Scope 1 & 2)

- High-volume procurement categories

- Logistics

Leave niche categories for later. Or don’t automate them at all—initially.

4. Keep Humans in the Loop (Strategically)

Full automation sounds appealing. In practice:

- Validation often needs human judgment

- Exception handling benefits from context

The goal isn’t to eliminate human involvement—it’s to reduce low-value effort.

The Subtle Trade-Off: Accuracy vs. Timeliness

Here’s a truth: You can’t always have perfectly accurate, real-time carbon data.

Organizations end up choosing:

- Highly accurate, but delayed reporting

- Near real-time insights, with estimation layers

Neither is inherently better. It depends on the use case:

- Compliance favors accuracy

- Operational decisions favor timeliness

Good systems allow both—though not always elegantly.

Why Do Many Carbon Automation Projects Stall?

It’s rarely because of technology.

More common reasons:

- Over-ambitious scope (“let’s automate everything at once”)

- Underestimated data complexity

- Lack of cross-functional alignment

There’s also a subtle organizational hesitation. Carbon data exposes inefficiencies—energy waste, supplier gaps, and operational inconsistencies. Not every team is eager to surface that.

Automation, in this context, becomes as much a cultural shift as a technical one.

Where This Is Heading

There’s no sudden leap coming where carbon data becomes perfectly automated overnight.

What’s actually happening:

- Gradual standardization of supplier reporting

- Better integration between operational and sustainability systems

- Incremental improvements in data quality

And yes, more mature carbon footprint automation architectures—built on lessons learned the hard way.

A Final Thought

You don’t need perfect data to start automating. But you do need honest data.

Automating flawed inputs without acknowledging their limitations just produces faster inaccuracies. On the other hand, starting with partial—but transparent—data often leads to better long-term outcomes.

Multi-source ingestion will remain messy. That’s not a phase—it’s the nature of the problem.

The real differentiator isn’t who automates everything first. It’s about who builds systems that can handle the mess without collapsing under it.